Recent data from IBM indicates that while 96% of enterprise employees now use Generative AI tools to increase productivity, fewer than 15% of companies have a formal Acceptable Use Policy (AUP) in place.

This gap has created a massive layer of "Shadow AI" where proprietary data, financial projections, and customer information are being fed into public models like ChatGPT, Gemini, or Claude to "speed things up." Our analysis of Terms of Service confirms that consumer or free versions of these tools often retain the right to train on your data. Without a policy, you have no legal standing to discipline this behavior, and your IP is effectively leaving the building to train your competitors' models.

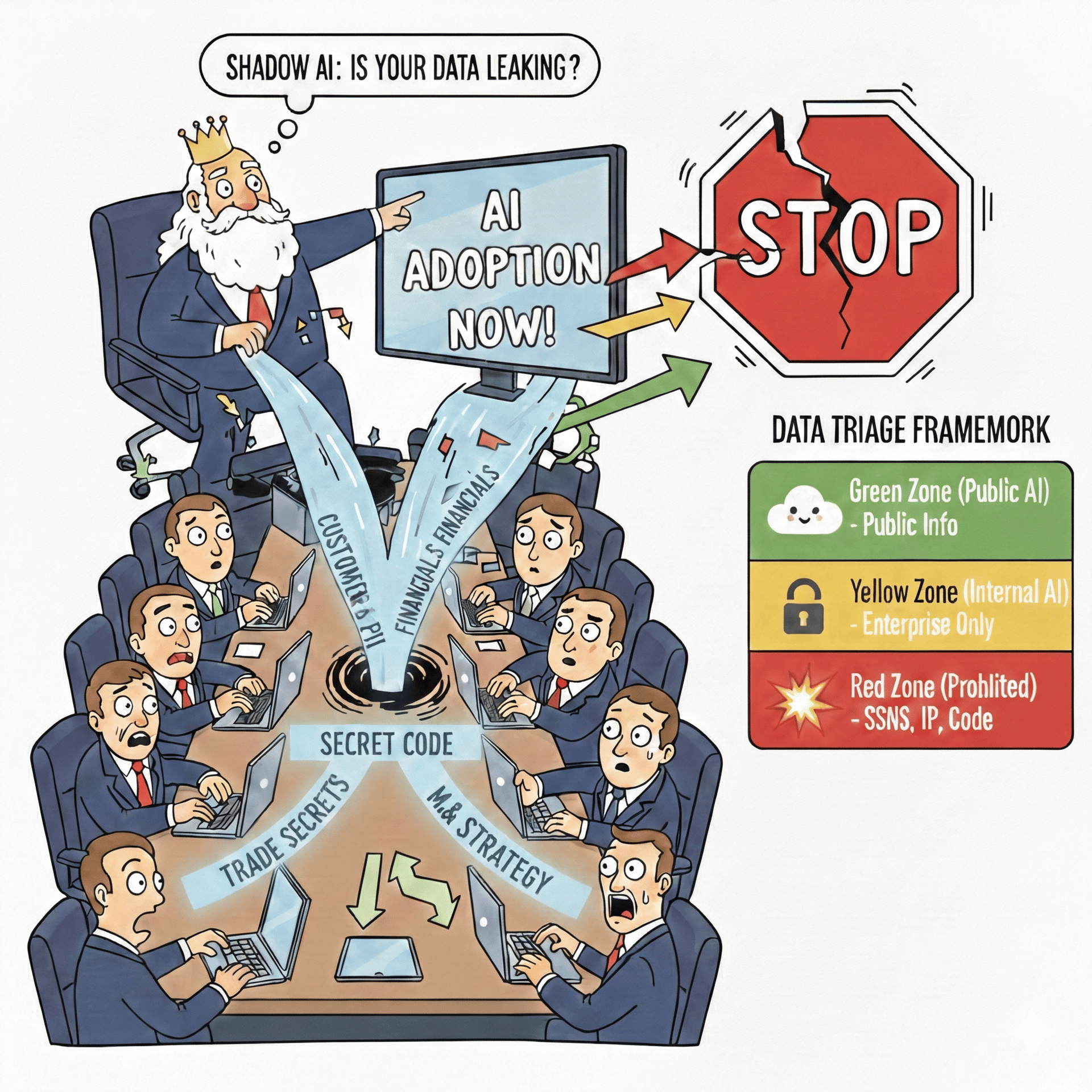

You do not need to ban AI. A ban will only stifle innovation and drive usage further underground. You need to "sandbox" it. A Tiered Acceptable Use Policy is the only mechanism that balances speed with security, allowing you to categorize data into "Safe" (AI-sharable) and "Unauthorized" (Prohibited) designations.

AI at all costs

To curb "Shadow AI," we suggest your team adopt a Data Triage Framework: a simple traffic light system that every employee can understand.

🟢 Green Zone (Public AI): Approved for use with ChatGPT/Gemini/Claude (Free online versions)

Data Allowed: Public marketing copy, brainstorming ideas, non-proprietary coding snippets, drafting public emails with no company information.

Risk: Low. This data is intended for the public domain anyway.

🟡 Yellow Zone (Internal AI): Approved ONLY for Enterprise License instances (e.g., ChatGPT Team, Microsoft 365 Copilot, Enterprise Gemini) where we have a contract ensuring "No Training" on our data.

Data Allowed: Internal memos, process documentation, non-sensitive financial data, strategic drafts.

Risk: Moderate. Safe within our enterprise tenant, but never to be pasted into a free web browser tool.

🔴 Red Zone (Prohibited): Never to be entered into any AI model, regardless of licensing.

Data: Customer PII (SSNs, emails), core IP/codebase, unreleased financial results, M&A strategy, passwords/credentials.

Risk: Critical. Exposure leads to regulatory fines (GDPR/CCPA) or loss of competitive advantage.
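For teams that want to wire the traffic light system into an internal tool (for example, a browser-extension check or a pre-submit prompt filter), the three zones can be sketched in a few lines of Python. This is a minimal illustration, not a finished control: the category labels, the tool tier names ("public", "enterprise"), and the rule that unknown data defaults to Red are all assumptions made for the example.

```python
from enum import IntEnum


class Zone(IntEnum):
    """Traffic light zones, ordered from least to most sensitive."""
    GREEN = 1   # may go into free/public AI tools
    YELLOW = 2  # enterprise-licensed ("No Training") tools only
    RED = 3     # never entered into any AI model


# Hypothetical category labels; replace with your own data inventory.
DATA_ZONES = {
    "public_marketing_copy": Zone.GREEN,
    "brainstorming": Zone.GREEN,
    "internal_memo": Zone.YELLOW,
    "process_documentation": Zone.YELLOW,
    "customer_pii": Zone.RED,
    "credentials": Zone.RED,
    "unreleased_financials": Zone.RED,
}

# Most sensitive zone each (assumed) tool tier is allowed to receive.
TOOL_CEILING = {
    "public": Zone.GREEN,
    "enterprise": Zone.YELLOW,
}


def is_allowed(category: str, tool_tier: str) -> bool:
    """Return True if data of this category may be sent to this tool tier."""
    # Assumption: anything not explicitly classified is treated as Red.
    zone = DATA_ZONES.get(category, Zone.RED)
    ceiling = TOOL_CEILING.get(tool_tier)
    if ceiling is None:
        # Unknown or unapproved tool tiers receive nothing.
        return False
    return zone <= ceiling


if __name__ == "__main__":
    for category, tier in [
        ("public_marketing_copy", "public"),
        ("internal_memo", "public"),
        ("internal_memo", "enterprise"),
        ("customer_pii", "enterprise"),
    ]:
        print(f"{category} -> {tier}: {is_allowed(category, tier)}")
```

Defaulting unclassified data to Red is a deliberately conservative choice: it is safer for an employee to ask for a classification than for an unlabeled document to slip into a public tool.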

We analyzed the Terms of Service of the top 4 AI tools to show exactly which ones reserve the right to train on or review your data.

OpenAI (Free): Default settings allow training on your chats unless you opt out in settings.

Anthropic (Free): Updated terms allow training unless you opt out.

Microsoft 365 Copilot: Commercial terms offer "Enterprise Data Protection" (No training).

Google Gemini (Free): Human reviewers may access your chats to "improve the service," but you can opt out in settings.

The results might surprise you and terrify your board. The difference between "Free" and "Enterprise" isn't just features; it's who owns your IP.

Does your team lack the bandwidth to implement this? Reply to this email with "Shadow AI" in the subject line and our team will send you a detailed example framework and AI policy.

To: CTO

CC: IT Leads

Subject: Review of AI Acceptable Use Policy & Shadow AI Risk

Team,

I want to review our current posture regarding "Shadow AI" usage across the firm.

Specifically, do we currently have a written policy that clearly distinguishes between "Public" (e.g., ChatGPT) and "Private" (e.g., Enterprise AI License) usage of AI models and tools?

My concern is that without clear guardrails, we are exposing IP by default. Recent reports suggest that consumer AI tools often retain rights to train on input data, meaning our proprietary info could inadvertently become part of a public model. The legal concept of "implicit authorization" suggests that if we know this is happening and do nothing, we may be waiving our trade secret protections.

If we have one, would you mind sending it to me? If not, could you prepare a draft Acceptable Use Policy (AUP)?

I want to see a "Tiered Risk" approach that defines exactly what data is permitted in public models versus what is strictly prohibited. We need to move from "Blocking" (which employees will bypass) to "Sandboxing."

My intent is to enable safe experimentation, not to block innovation, but we must secure our perimeter.

Best,

[Your Name]