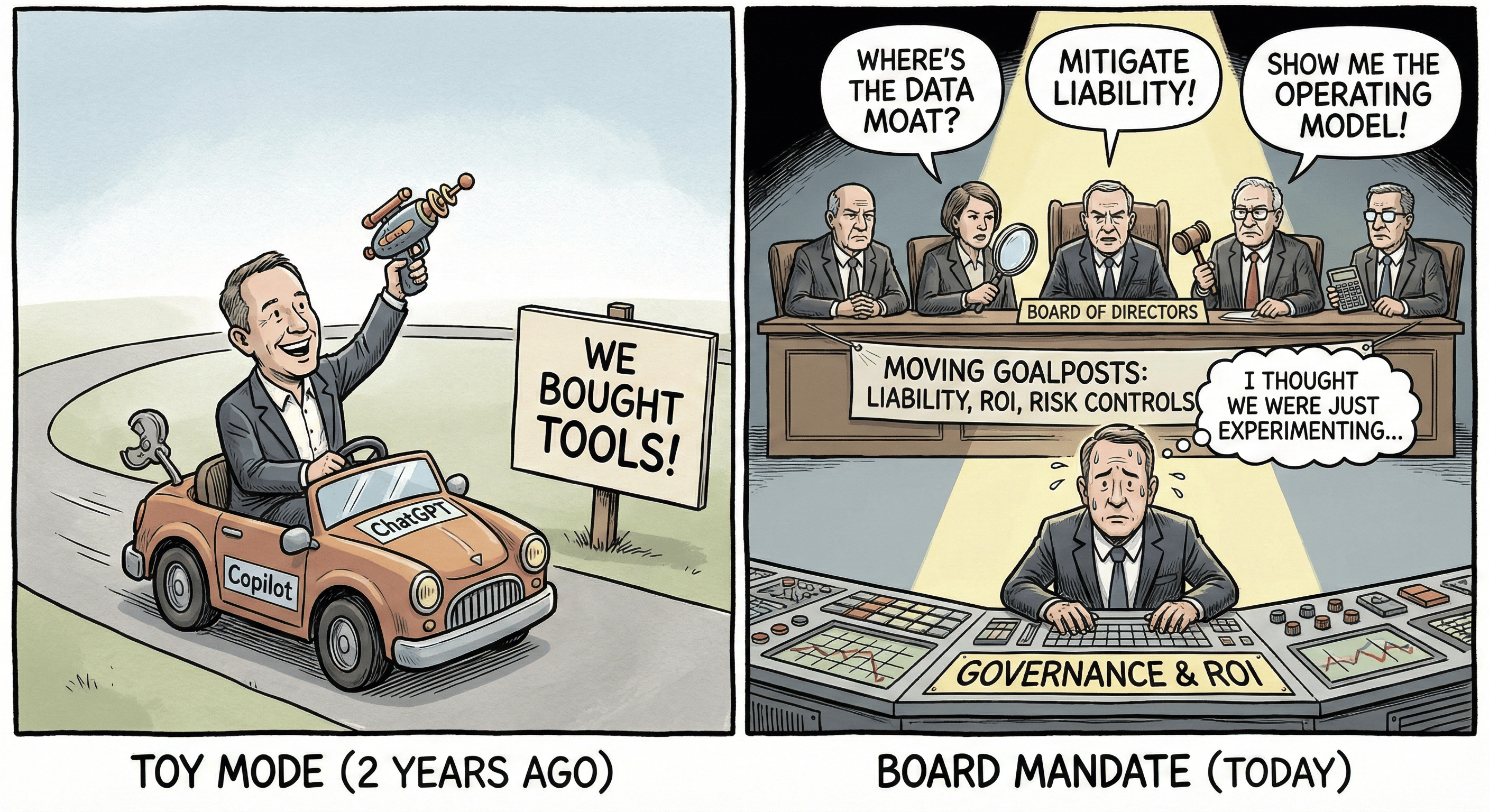

Most mid-market CEOs answer the "Strategy" question by listing tools (e.g., "We bought Copilot," "We use ChatGPT").

This answer is a red flag. It tells the Board you are in "Toy Mode." Boards know that 95% of AI pilots fail to scale because they lack operational discipline. They don't want to hear about experimentation; they want to hear about asset allocation, risk controls, and competitive moats.

To answer the "Strategy" question credibly, you must stop talking about "technology" and start talking about "operating models." We recommend shifting the conversation from adoption to governance and leverage.

The "Lightweight Governance" Protocol

You cannot wait for federal regulation; you must build a defensible position now. Use this 3-step protocol to answer the Board’s risk questions.

Step 1: Inventory & Triage. Create a simple register of every AI system. Tag them as High Risk (Hiring, Firing, Lending, Legal) or Low Risk (Drafting, Summarizing).

Step 2: The "Human Circuit Breaker." Institute a mandatory policy, as discussed in our Human In The Loop newsletter: no AI output from a "High Risk" system leaves the building without a human sign-off. This is your primary liability shield for critical processes.

Step 3: The Data Moat. For core business logic, move from "Renting Intelligence" (SaaS) to "Owning Context" (RAG). Ensure your proprietary data is stored in a vector database or secure location you control, not fed directly into a public model. See our newsletter on “Shadow AI” and “AI Copyright” for details on protecting your data.
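
For teams that want to hand Steps 1 and 2 to engineering, here is a minimal Python sketch of what the register and the sign-off gate might look like. It is an illustration under stated assumptions, not a finished control: the names `AISystem`, `Risk`, and `can_release` are hypothetical, the example systems are invented, and treating any unlisted use case as High Risk is our conservative assumption rather than part of the protocol text.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Risk(Enum):
    HIGH = "high"
    LOW = "low"


# Low Risk categories named in Step 1. Assumption: anything not listed here
# (including Hiring, Firing, Lending, Legal) is treated as High Risk.
LOW_RISK_USES = {"drafting", "summarizing"}


@dataclass
class AISystem:
    """One row in the AI register (Step 1)."""
    name: str
    use_case: str
    approved: bool = False  # officially sanctioned, or "shadow" usage

    @property
    def risk(self) -> Risk:
        if self.use_case.lower() in LOW_RISK_USES:
            return Risk.LOW
        return Risk.HIGH


def can_release(system: AISystem, human_signoff: Optional[str]) -> bool:
    """Step 2: High Risk output needs a named human reviewer before release."""
    if system.risk is Risk.HIGH:
        return bool(human_signoff)
    return True


# Hypothetical register entries, for illustration only.
register = [
    AISystem("resume-screener", "hiring", approved=True),
    AISystem("meeting-notes", "summarizing"),
]

for system in register:
    print(system.name, system.risk.value, can_release(system, human_signoff=None))
```

In this sketch the resume screener is blocked until a reviewer's name is supplied, while the meeting-notes tool passes through. A real deployment would also log who signed off and when, so the record is available if the Board or a regulator asks.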

“What is your AI strategy?” is too often answered with a list of tools or pilots. But real AI strategy is not about deploying copilots; it is about redesigning the operating model. Analysis shows that companies win when they move from isolated productivity gains to agentic workflows, from buying generic capability to building proprietary data advantage, and from reactive governance to engineered risk controls. AI strategy is ultimately about breaking the historical link between revenue growth and headcount growth (more is possible with less) without surrendering competitive differentiation or liability control. In tomorrow's LinkedIn post and additional resource, we go deeper and unpack how to shift the Board conversation from experimentation to enterprise leverage, and why 2026 belongs to companies that treat AI as infrastructure, not software.

The gap between "using AI" and "having an AI Strategy" is Governance. If you are unsure where your employees are pasting company data, or which regulations (like the Colorado AI Act or EU AI Act) you are currently violating, you are operating blind. Week after week, this newsletter will help you address your key questions.

Click Subscribe so you don't miss an upcoming newsletter. Reply “AI Strategy” to receive your first guideline for your next board meeting.

Do not try to invent the strategy alone. You need the ground truth of your data and risk exposure first. Copy and paste this email to your leadership team today to prepare for your next board meeting.

Subject: ACTION REQUIRED: Pre-Board AI Impact Assessment

Team,

In preparation for our upcoming Board meeting, I need to crystallize our position on AI beyond just the tools we are testing. Please provide me with the following by:

The "Shadow" Inventory: A candid list of all AI tools currently in use by your departments, officially approved or otherwise. We need to know our surface area.

Data Dependency: Which of our core competitive workflows (e.g., pricing, underwriting, customer support) are currently reliant on third-party models we do not control?

The "Agentic" Opportunity: Identify one specific workflow where we can move from "human-in-the-loop" to "human-on-the-loop" (autonomous agents) within 90 days to drive measurable hard ROI.

We are moving from experimentation to strategic integration. I need the raw data to build that roadmap.

Best,